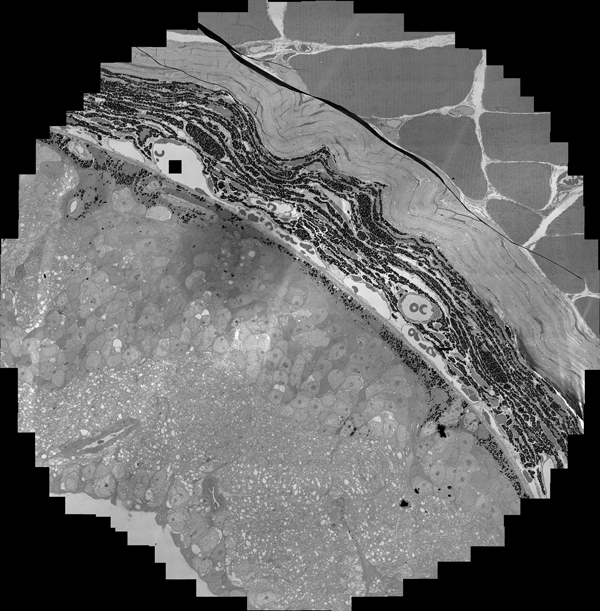

We are making excellent progress on our retinal reconstruction project. There are still some issues related to optimizing code, but we are now able to capture the EM data in a semiautomated fashion, followed by registration and mosaicing of the EM data in a completely automated fashion with image sizes of up to 128000 X 128000 pixels. The code will scale beyond that, but ~128000 X ~128000 pixels is the largest data that we’ve assembled to date.

The really impressive thing about this is that EM data has intrinsic polynomial transforms embedded in the image data that have to be overcome to properly align one image to another. It is the equivalent of optical rectilinear correction problems in wide angle lenses, but at the quantum mechanical level rather than optical. Our code can deal with these embedded polynomial warps and approximate borders within the images making for a seamless montage, an issue necessary for the reconstruction of the retina.

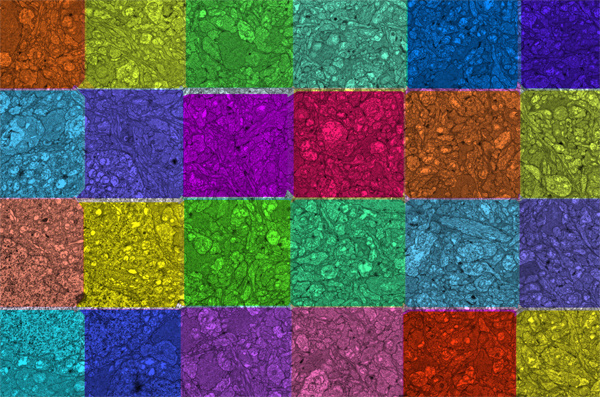

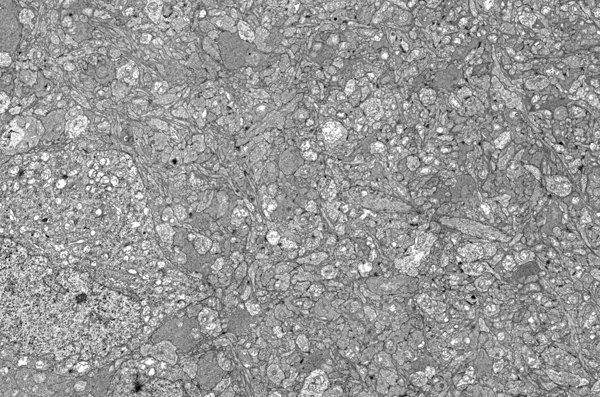

The image above is a color coded image showing the mosaics of the image immediately below. The important part of an image montage is that it gets you the resolution you need for synaptic identification to reconstruct the circuitry over large areas.

I’ve actually been toying with the idea of starting a company dedicated to complete reconstructions of tissues at the ultrastructural level. Once we have the necessary technology complete and ready for a production level environment, we will have met one of the grand challenges of neuroscience and there is nothing to stop us from reconstructing other neural tissues with identifiers built into them on cellular identity. I can easily imagine a small shop of 5-6 people that builds ultrastructural datasets for other researchers or bioscience industry in normal and pathological tissues of all sorts… not just neural tissues. Given the investment nationwide in these sorts of problems, 2-3 $Million in annual revenues to start is not unreasonable…